How AI Is Turning Surgery From Art Into Science

Date

April 10, 2026

In Brief

- Surgical outcomes have long been disconnected from day-of-surgery decisions. Quality-of-life impact is poorly understood and largely accepted as inevitable.

- AI-driven video analysis can identify which surgical gestures matter most, predicting long-term outcomes more accurately than traditional clinical factors alone.

- Once gestures are measurable, they can be taught and improved, shifting surgery from inherited technique toward evidence-based practice across specialties.

During a single operation, surgeons make thousands of decisions. Until recently, medicine had no practical way of knowing which of these decisions mattered most to patient outcomes.

This gap has real consequences, especially for quality of life after surgery. New research at Cedars-Sinai, focused on prostate cancer procedures, suggests that such harm is not inevitable. Through meticulous analysis of surgical video, investigators have identified which surgical gestures correlate with a patient’s recovery.

“For all the decades we’ve been doing surgeries, we’ve had no way to link what happens on the day of the surgery directly to the outcome,” said Andrew Hung, MD, a professor of Urology and Computational Biomedicine at Cedars-Sinai. “Now, we can use AI tools to help us analyze a surgery in tiny increments to determine which decisions affect the outcomes.”

Surgery has long been taught as tradition, with techniques passed from mentor to mentee like lore. Hung’s work marks a shift from surgery as purely an inherited craft to surgery as a measurable scientific practice with the potential to improve outcomes that dramatically shape patients’ lives. AI doesn’t dictate how surgeries should be performed, but it does provide the ability to analyze thousands of movements and decisions, allowing visibility into their impact.

“We’re trying to take the reliance away from surgery as an art and refine it into a more exact science,” Hung said.

When Survival Isn’t the Only Outcome

Prostate cancer surgery provides a unique opportunity for improvement: While prostate surgery often succeeds in removing the cancer, nearly 65% of men who undergo the procedure are left with long-term sexual dysfunction. This serious quality-of-life harm has persisted despite advances in surgical technique. The outcome is well documented, but the explanation has remained elusive.

“That only 35% of men regain sexual function has just been accepted as a matter of fact,” Hung said. “As a surgeon and a scientist, it’s frustrating when you cannot predict why one patient has a good outcome and another doesn’t.”

In an attempt to uncover hidden variables, Hung’s team examined prostate cancer surgery not as a single event, but as a sequence of thousands of individual actions, or surgical gestures.

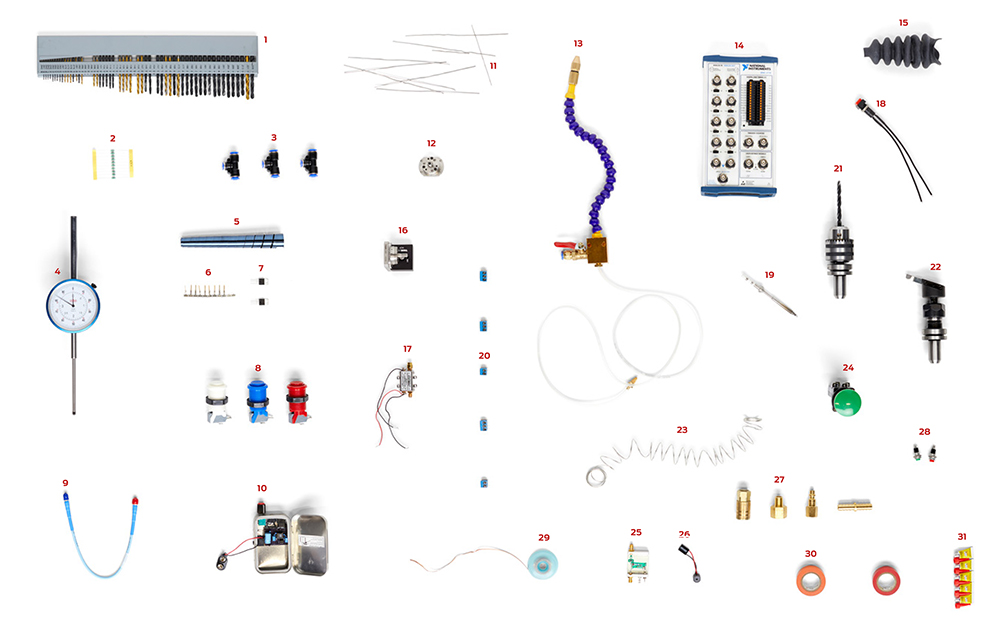

In a 2022 study published in npj Digital Medicine, the team analyzed 80 nerve-sparing robotic prostate cancer surgeries performed by 21 surgeons across two international medical centers.

More than 34,000 individual surgical gestures were identified and classified in painstaking manual annotation. Human reviewers watched surgical videos second by second, labeling each gesture before feeding the data into statistical or machine-learning models.

Investigators found that peeling and pushing gestures that gently separate tissue during the procedure were associated with recovery of erectile function within one year of surgery, while techniques using heat were more likely to be associated with loss of sexual function.

They also found that the effect of certain gestures depended on the surgeon’s experience, so technique and context matter in addition to the tool that is used.

The team then trained machine-learning models on gesture sequences alone, with no access to patient age, prostate-specific antigen levels, tumor characteristics or other traditional predictors. These models predicted one-year erectile function recovery more accurately than those traditional clinical factors did.
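To make the idea of learning from gesture sequences concrete, here is a minimal sketch of how one surgery's ordered gestures could be turned into numeric features for a model. It is an illustration only, not the team's pipeline: the gesture names, the `gesture_features` function and the toy case are hypothetical, and the study's actual feature design is not described in this article.

```python
from collections import Counter


def gesture_features(sequence):
    """Turn one surgery's ordered gesture labels into simple numeric features.

    Hypothetical helper for illustration; not the method used in the study.
    """
    total = len(sequence)
    counts = Counter(sequence)
    # Share of each gesture type within the case.
    features = {f"share:{g}": n / total for g, n in counts.items()}
    # Adjacent pairs keep some information about the order of gestures.
    pairs = Counter(zip(sequence, sequence[1:]))
    n_pairs = max(total - 1, 1)
    for (first, second), n in pairs.items():
        features[f"pair:{first}>{second}"] = n / n_pairs
    return features


# Toy case with made-up labels (assumption: three gesture types only).
case = ["peel", "push", "peel", "heat", "peel"]
feats = gesture_features(case)
print(feats["share:peel"])      # 0.6
print(feats["pair:peel>push"])  # 0.25
```

Features like these contain no patient age or tumor data, which mirrors the constraint described above: the model sees only what the surgeon did and in what order.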

This study provides a powerful proof of concept, but analyzing each video by hand, second by second, presents a serious limitation. Hung and his team have since applied AI to analyze video directly, allowing for a larger sample and the potential to expand to more types of surgeries.

Real-Time Intelligence

Modern operating rooms have long captured video, instrument motion and timing, but the data were essentially inaccessible. The sheer volume of detail was impossible to analyze, and the long delays between surgeries and outcomes made meaningful connections difficult to draw.

Previous studies on surgical performance relied on traditional statistical methods, which require researchers to decide in advance which variables to measure and test. The approach works well for factors like age, tumor size or lab values, but it does not capture the complexity of surgery, in which hundreds of small, sequential decisions and movements interact over time.

Now, using advances in computer vision, Hung’s group has developed AI systems that can watch surgical videos, automatically identify gestures and analyze their sequence without human labeling. What once took weeks of manual work can now be achieved in minutes, across far larger datasets.

By analyzing gesture sequences alone, without patient demographics or tumor characteristics, the team’s machine-learning models can now predict one-year recovery of erectile function with up to 85% accuracy. The models also can highlight which parts of the surgical sequence are driving those predictions, pointing researchers toward specific techniques that warrant closer clinical scrutiny.
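The article does not say how the models highlight influential parts of a sequence. One generic technique for this kind of question is occlusion: remove a stretch of the sequence and see how much the prediction moves. The sketch below shows the idea under loud assumptions. `toy_score` is a stand-in with made-up weights, not the team's model, and the gesture labels are hypothetical.

```python
def toy_score(sequence):
    """Stand-in for a trained model; higher means recovery looks more likely.

    The weights are invented for illustration and are not from the study.
    """
    weights = {"peel": 1.0, "push": 1.0, "heat": -2.0}
    if not sequence:
        return 0.0
    return sum(weights.get(g, 0.0) for g in sequence) / len(sequence)


def occlusion_importance(sequence, score_fn, window=2):
    """Return (start_index, score_change) for each non-overlapping window.

    score_change is the baseline score minus the score with that window removed.
    """
    base = score_fn(sequence)
    results = []
    for start in range(0, len(sequence), window):
        reduced = sequence[:start] + sequence[start + window:]
        results.append((start, base - score_fn(reduced)))
    return results


# Toy case: two gentle gestures, two heat gestures, two gentle gestures.
case = ["peel", "push", "heat", "heat", "peel", "push"]
importance = occlusion_importance(case, toy_score)
most_influential = max(importance, key=lambda item: abs(item[1]))
print(most_influential)  # (2, -1.0): removing the heat window raises the score most
```

A negative change means the prediction improved when that window was removed, which is the kind of signal that would flag a segment for closer clinical review rather than settle the question.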

For the first time, the minute-by-minute decisions made in the operating room are no longer buried in troves of inaccessible data. They are measurable and teachable.

A crucial concern about integrating AI into surgery is whether it risks flattening a surgeon’s judgment or replacing it entirely.

Hung is clear: AI is not an authority, but a precise observer capable of noticing relationships across hundreds of procedures and years of outcomes.

“AI does not replace surgeons,” he said. “It cannot tell you what to do. It is effective for showing you patterns that you might never see on your own.”

Surgery is defined by nuance and variation. No two patients have identical anatomy, and no two operations unfold in the same way. AI-generated insights are only useful when interpreted through clinical expertise, Hung said.

When AI models identify consistent associations between specific surgical gestures and long-term outcomes, investigators see a starting point for inquiry—not an instruction manual.

Surgeons must still decide how to apply insights, when to make exceptions and how patient-specific factors shape each case.

“Prediction alone isn’t enough,” Hung said. “Surgeons must look at what the model is seeing and decide whether it makes clinical sense.”

Measurable Becomes Teachable

Once surgical performance can be measured, successful technique can be shared, tested and taught in new ways. For generations, surgical techniques have been passed from mentor to trainee, shaped by experience and repetition. While this model has produced highly skilled surgeons, it also means that best practices are often difficult to define and even harder to standardize.

“What we’re really trying to change is how we teach surgery,” Hung said.

Rather than relying solely on tradition and apprenticeship, surgeons can begin to learn from large-scale, data-driven evidence about what actually improves patient outcomes—a potential revolution in how surgeons are trained. By breaking procedures into measurable gestures and linking those gestures to outcomes, Hung’s team is creating a framework for evidence-based surgical education.

The approach also opens the door to improvement across institutions. Because Hung’s research draws on data from multiple hospitals and surgeons nationwide, its findings are not tied to a single center or style of practice. The breadth of information strengthens the conclusions and makes them more broadly applicable.

And while prostate cancer surgery remains a central focus, the model is not limited to one specialty. Hung’s team has already partnered with surgeons in general surgery to investigate procedures with similarly persistent gaps in outcomes.

For example, at Cedars-Sinai, Hung is collaborating with Miguel Burch, MD, chief of Minimally Invasive and Gastrointestinal Surgery and the Jim and Eleanor Randall Chair in Surgery in honor of Edward H. Phillips, MD, to apply the analysis to hiatal hernia surgeries. These procedures correct a condition in which the stomach has risen above the diaphragm and can lead to gastroesophageal reflux disease symptoms. Despite more sophisticated repair or esophagus-lengthening techniques, a high recurrence rate of symptoms or the hernias themselves has vexed surgeons and patients. Hung and Burch are now using AI to examine surgeries and identify how to improve outcomes.

AI provides surgeons, for the first time, with a way to systematically connect actions in the operating room to how patients live after surgery.

“We’re focused on real problems: procedures where outcomes haven’t improved despite decades of effort,” Hung said. “We can see and analyze the smallest decisions we make in surgery. They’re not invisible anymore. Now, we can use them to achieve better outcomes for our patients.”